Social Media Platforms

Meta announces new guidelines for teens on Instagram-Facebook

Implementation of the new policies means teens will see their accounts placed on the most restrictive settings on the platforms

MENLO PARK, Calif. – Social media giant Meta announced Tuesday new content policies for teens that restrict access to inappropriate content, including posts about suicide, self-harm and eating disorders, on both of its largest platforms, Instagram and Facebook.

In a post on the company blog, Meta wrote:

Take the example of someone posting about their ongoing struggle with thoughts of self-harm. This is an important story, and can help destigmatize these issues, but it’s a complex topic and isn’t necessarily suitable for all young people. Now, we’ll start to remove this type of content from teens’ experiences on Instagram and Facebook, as well as other types of age-inappropriate content. We already aim not to recommend this type of content to teens in places like Reels and Explore, and with these changes, we’ll no longer show it to teens in Feed and Stories, even if it’s shared by someone they follow.

“We want teens to have safe, age-appropriate experiences on our apps,” Meta said.

Implementation of the new policies means teens will see their accounts placed on the most restrictive settings on the platforms, the caveat being that the teen didn’t lie about their age when they set the accounts up.

Other changes the company announced include:

To help make sure teens are regularly checking their safety and privacy settings on Instagram, and are aware of the more private settings available, we’re sending new notifications encouraging them to update their settings to a more private experience with a single tap. If teens choose to “Turn on recommended settings”, we will automatically change their settings to restrict who can repost their content, tag or mention them, or include their content in Reels Remixes. We’ll also ensure only their followers can message them and help hide offensive comments.

In November, California Attorney General Rob Bonta announced the public release of a largely unredacted copy of the federal complaint filed by a bipartisan coalition of 33 attorneys general against Meta Platforms, Inc. and affiliates (Meta) on October 24, 2023.

Co-led by Attorney General Bonta, the coalition is alleging that Meta designed and deployed harmful features on Instagram and Facebook that addict children and teens to their mental and physical detriment.

Highlights from the newly revealed portions of the complaint include the following:

- Mark Zuckerberg personally vetoed Meta’s proposed policy to ban image filters that simulated the effects of plastic surgery, despite internal pushback and an expert consensus that such filters harm users’ mental health, especially for women and girls. Complaint ¶¶ 333-68.

- Despite public statements that Meta does not prioritize the amount of time users spend on its social media platforms, internal documents show that Meta set explicit goals of increasing “time spent” and meticulously tracked engagement metrics, including among teen users. Complaint ¶¶ 134-150.

- Meta continuously misrepresented that its social media platforms were safe, while internal data revealed that users experienced harms on its platforms at far higher rates. Complaint ¶¶ 458-507.

- Meta knows that its social media platforms are used by millions of children under 13, including, at one point, around 30% of all 10–12-year-olds, and unlawfully collects their personal information. Meta does this despite Mark Zuckerberg testifying before Congress in 2021 that Meta “kicks off” children under 13. Complaint ¶¶ 642-811.

The Associated Press reported that critics charge Meta’s moves don’t go far enough.

“Today’s announcement by Meta is yet another desperate attempt to avoid regulation and an incredible slap in the face to parents who have lost their kids to online harms on Instagram,” said Josh Golin, executive director of the children’s online advocacy group Fairplay. “If the company is capable of hiding pro-suicide and eating disorder content, why have they waited until 2024 to announce these changes?”


Queer Mercado taking steps to right their wrongs

As part of that action plan, the Mercado released a survey to the community to gain a better understanding of community needs going forward

Earlier this year, the organization posted transphobic remarks on social media through the Queer Mercado Instagram account. Co-founder Diana Díaz says she trusted the wrong person to run that account and represent the Queer Mercado, and that the person who made the comment didn’t realize they were commenting through the brand’s account.

Díaz says she believes that she is now making better decisions to benefit Queer Mercado and continue nurturing it, so it can continue growing. She is open to conversations regarding the event and how to make it a safer space for the communities involved.

In an interview, Díaz said she was inspired to create this space because, as a K-12 public school counselor in Boyle Heights, she was the first person that parents would go to when their child came out as queer. Her students trusted her as an ally to go to when they felt they needed support as queer and trans children.

“I worked at all the local school districts as a school counselor and I got to see how the family would react to their child coming out and it was very painful and very personal to me because I love these kids,” said Díaz.

She said that she couldn’t understand why so many of those parents reacted the way they did, knowing that these children were perfectly healthy and only looking for safety and support during a difficult and confusing time.

Although Díaz admits that she is not part of the LGBTQ+ community, she has strong ties to the community as an ally for children who have to not only deal with coming out and coming to terms with their identities, but who also have to deal with the extra burden of coming out within the Latinx community, which often reinforces misogyny, homophobia and transphobia.

Under this particularly hostile administration, it is rare to find an ally like Díaz, who not only stands up for the most vulnerable members of the LGBTQ+ community, but who also tirelessly works to make spaces like Queer Mercado, where the families of those children can feel welcome to explore these identities and this community in a way that is inclusive of all ages. Díaz says she made this space for the Latinx families and parents of LGBTQ+ children in an effort to build stronger relationships.

“I recruited artists and other volunteers to help me start the market,” said Díaz. “I didn’t see a problem with an ally doing it because there is no other free public space for queer families like [Queer Mercado].”

She also notes that this is the only space designated for art and community, specifically catered to the Latinx community in a city with one of the largest demographics of queer and trans Latinx people.

“I know not every grandma is going to be able to go to The Abbey, you know in West Hollywood, or Precinct. Some of us like to go to bed early.”

Díaz first founded Goddess Mercado and says that after she started it, one of her students asked her about creating a space for LGBTQ+ families, which is when she thought of creating the Queer Mercado. She saw the need for this space and realized she could be the person to bring the representation that was needed.

Díaz comes from a family who made their living as vendors at swapmeets and other community spaces, so a space like this for her is deeply personal.

ChiChi LaPinga, a multi-hyphenated activist and community leader in queer and trans spaces, was recently hired as Director of Outreach for the Queer Mercado. They are now in charge of facilitating ideas about how to better the Mercado and make the space as safe as possible for everyone who identifies as a member of the queer and trans communities.

Earlier this year, when the Queer Mercado was caught up in this issue, many community members, vendors and attendees who had avidly supported the event said they no longer wanted to support it if Díaz didn’t step down. Díaz founded the event and continues to believe that she can do more to bridge the gap between hostile families and their queer and trans children by continuing her efforts as founder.

She now says that she needs to take steps to regain community trust by bringing in people who are willing and able to learn, grow, evolve and make the space better than ever.

As part of that action plan, the Mercado released a survey to the community in February to gain a better understanding of community needs going forward.

“I think that this [incident] is a perfect example of why there needs to be queer people in positions of leadership – so that people who aren’t part of the queer community like Diana, are guided through the process,” said ChiChi LaPinga.

ChiChi LaPinga is a Mexican, trans and nonbinary community leader and activist in Los Angeles who has built a reputation over the years working with and representing the queer, trans and Latinx communities.

They say that people like Díaz should be putting people who are queer, who are part of the community, in these positions of influence and power and this incident proves why that is so important and crucial to a space like this.

“It was a very unfortunate situation. It was an error made by ignorance and something that I personally do not condone, right me as a transgender, non binary person, as a decent basic, you know, as a decent human being,” said ChiChi LaPinga. “I am also not the expert on all things, and I rely on my community to educate me on those things, and that is what all allies should do.”

Starting in March, and going forward, ChiChi LaPinga said they have pushed for there to be more panel discussions incorporated into the events where they can discuss issues that affect the community from different perspectives.

“One of the changes that I’ve always wanted to see at the Queer Mercado was to have panel discussions on stage, which is something that I introduced last month and am continuing this month,” said ChiChi LaPinga.

In our candid conversation, ChiChi LaPinga opened up about their own identity and struggles with embracing their identities within a culture that is misogynistic, homophobic and transphobic. They say they understand the community response and push-back for change in leadership, because Queer Mercado should be run by people who are inclusive and accepting of all identities within the LGBTQ+ umbrella.

However, Díaz says she founded the mercado, which is why she hopes to continue leading it, but in a new way that incorporates new voices into conversations about how to move forward.

She saw a need for a space like this and made it happen for her students and their families. She says she hopes that the conversations can continue to help her make better decisions going forward.

Ultimately, ChiChi LaPinga advises community members to make the decision to return to Queer Mercado on their own and only if they feel ready to do so.

“If you do not feel safe in certain spaces, make the decision that is best for you, because I would do the same,” said ChiChi LaPinga.


Instagram battles financial sextortion scams, blurs DM nudity

When sending or receiving these images, people will be directed to safety tips, developed with guidance from experts, about potential risks

Editor’s note: The following article is provided as a public service for readers regarding actions taken by Instagram, a social media platform, dealing with a subject of general interest and concern. The Los Angeles Blade has not verified the information contained herein.

By Meta Public & Media Relations | MENLO PARK, Calif. – Financial sextortion is a horrific crime. We’ve spent years working closely with experts, including those experienced in fighting these crimes, to understand the tactics scammers use to find and extort victims online, so we can develop effective ways to help stop them.

Today, we’re sharing an overview of our latest work to tackle these crimes. This includes new tools we’re testing to help protect people from sextortion and other forms of intimate image abuse, and to make it as hard as possible for scammers to find potential targets – on Meta’s apps and across the internet. We’re also testing new measures to support young people in recognizing and protecting themselves from sextortion scams.

These updates build on our longstanding work to help protect young people from unwanted or potentially harmful contact. We default teens into stricter message settings so they can’t be messaged by anyone they’re not already connected to, show Safety Notices to teens who are already in contact with potential scam accounts, and offer a dedicated option for people to report DMs that are threatening to share private images. We also supported the National Center for Missing and Exploited Children (NCMEC) in developing Take It Down, a platform that lets young people take back control of their intimate images and helps prevent them being shared online – taking power away from scammers.

Takeaways:

- We’re testing new features to help protect young people from sextortion and intimate image abuse, and to make it more difficult for potential scammers and criminals to find and interact with teens.

- We’re also testing new ways to help people spot potential sextortion scams, encourage them to report and empower them to say no to anything that makes them feel uncomfortable.

- We’ve started sharing more signals about sextortion accounts to other tech companies through Lantern, helping disrupt this criminal activity across the internet.

Introducing Nudity Protection in DMs

While people overwhelmingly use DMs to share what they love with their friends, family or favorite creators, sextortion scammers may also use private messages to share or ask for intimate images. To help address this, we’ll soon start testing our new nudity protection feature in Instagram DMs, which blurs images detected as containing nudity and encourages people to think twice before sending nude images. This feature is designed not only to protect people from seeing unwanted nudity in their DMs, but also to protect them from scammers who may send nude images to trick people into sending their own images in return.

Nudity protection will be turned on by default for teens under 18 globally, and we’ll show a notification to adults encouraging them to turn it on.

When nudity protection is turned on, people sending images containing nudity will see a message reminding them to be cautious when sending sensitive photos, and that they can unsend these photos if they’ve changed their mind.

Anyone who tries to forward a nude image they’ve received will see a message encouraging them to reconsider.

When someone receives an image containing nudity, it will be automatically blurred under a warning screen, meaning the recipient isn’t confronted with a nude image and they can choose whether or not to view it. We’ll also show them a message encouraging them not to feel pressure to respond, with an option to block the sender and report the chat.

When sending or receiving these images, people will be directed to safety tips, developed with guidance from experts, about the potential risks involved. These tips include reminders that people may screenshot or forward images without your knowledge, that your relationship to the person may change in the future, and that you should review profiles carefully in case they’re not who they say they are. They also link to a range of resources, including Meta’s Safety Center, support helplines, StopNCII.org for those over 18, and Take It Down for those under 18.

Nudity protection uses on-device machine learning to analyze whether an image sent in a DM on Instagram contains nudity. Because the images are analyzed on the device itself, nudity protection will work in end-to-end encrypted chats, where Meta won’t have access to these images – unless someone chooses to report them to us.

“Companies have a responsibility to ensure the protection of minors who use their platforms. Meta’s proposed device-side safety measures within its encrypted environment is encouraging. We are hopeful these new measures will increase reporting by minors and curb the circulation of online child exploitation.” — John Shehan, Senior Vice President, National Center for Missing & Exploited Children.

“As an educator, parent, and researcher on adolescent online behavior, I applaud Meta’s new feature that handles the exchange of personal nude content in a thoughtful, nuanced, and appropriate way. It reduces unwanted exposure to potentially traumatic images, gently introduces cognitive dissonance to those who may be open to sharing nudes, and educates people about the potential downsides involved. Each of these should help decrease the incidence of sextortion and related harms, helping to keep young people safe online.” — Dr. Sameer Hinduja, Co-Director of the Cyberbullying Research Center and Faculty Associate at the Berkman Klein Center at Harvard University.

Preventing Potential Scammers from Connecting with Teens

We take severe action when we become aware of people engaging in sextortion: we remove their account, take steps to prevent them from creating new ones and, where appropriate, report them to the NCMEC and law enforcement. Our expert teams also work to investigate and disrupt networks of these criminals, disable their accounts and report them to NCMEC and law enforcement – including several networks in the last year alone.

Now, we’re also developing technology to help identify where accounts may potentially be engaging in sextortion scams, based on a range of signals that could indicate sextortion behavior. While these signals aren’t necessarily evidence that an account has broken our rules, we’re taking precautionary steps to help prevent these accounts from finding and interacting with teen accounts. This builds on the work we already do to prevent other potentially suspicious accounts from finding and interacting with teens.

One way we’re doing this is by making it even harder for potential sextortion accounts to message or interact with people. Now, any message requests potential sextortion accounts try to send will go straight to the recipient’s hidden requests folder, meaning they won’t be notified of the message and never have to see it. For those who are already chatting to potential scam or sextortion accounts, we show Safety Notices encouraging them to report any threats to share their private images, and reminding them that they can say no to anything that makes them feel uncomfortable.

For teens, we’re going even further. We already restrict adults from starting DM chats with teens they’re not connected to, and in January we announced stricter messaging defaults for teens under 16 (under 18 in certain countries), meaning they can only be messaged by people they’re already connected to – no matter how old the sender is. Now, we won’t show the “Message” button on a teen’s profile to potential sextortion accounts, even if they’re already connected. We’re also testing hiding teens from these accounts in people’s follower, following and like lists, and making it harder for them to find teen accounts in Search results.

New Resources for People Who May Have Been Approached by Scammers

We’re testing new pop-up messages for people who may have interacted with an account we’ve removed for sextortion. The message will direct them to our expert-backed resources, including our Stop Sextortion Hub, support helplines, the option to reach out to a friend, StopNCII.org for those over 18, and Take It Down for those under 18.

We’re also adding new child safety helplines from around the world into our in-app reporting flows. This means when teens report relevant issues – such as nudity, threats to share private images or sexual exploitation or solicitation – we’ll direct them to local child safety helplines where available.

Fighting Sextortion Scams Across the Internet

In November, we announced we were founding members of Lantern, a program run by the Tech Coalition that enables technology companies to share signals about accounts and behaviors that violate their child safety policies.

This industry cooperation is critical, because predators don’t limit themselves to just one platform – and the same is true of sextortion scammers. These criminals target victims across the different apps they use, often moving their conversations from one app to another. That’s why we’ve started to share more sextortion-specific signals to Lantern, to build on this important cooperation and try to stop sextortion scams not just on individual platforms, but across the whole internet.

*****************************************************************************************

The preceding article was previously published by Instagram.


Social Media platforms still lagging on critical LGBTQ+ protections

All Social Media platforms should have policy prohibitions against harmful so-called “Conversion Therapy” content

By Leanna Garfield & Jenni Olson | NEW YORK – GLAAD, the world’s largest lesbian, gay, bisexual, transgender, and queer (LGBTQ) media advocacy organization, released new reports documenting the current state of two important LGBTQ safety policy protections on social media platforms.

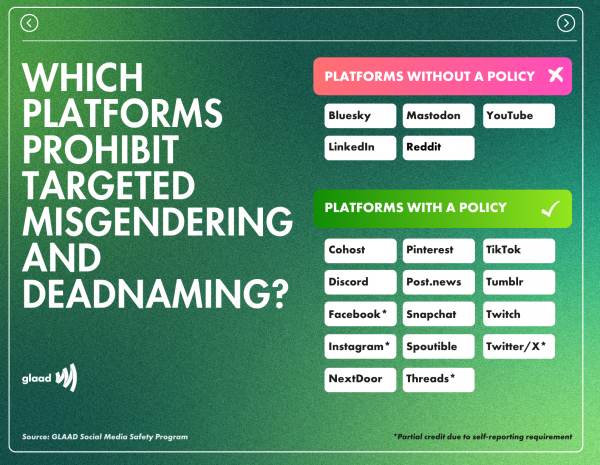

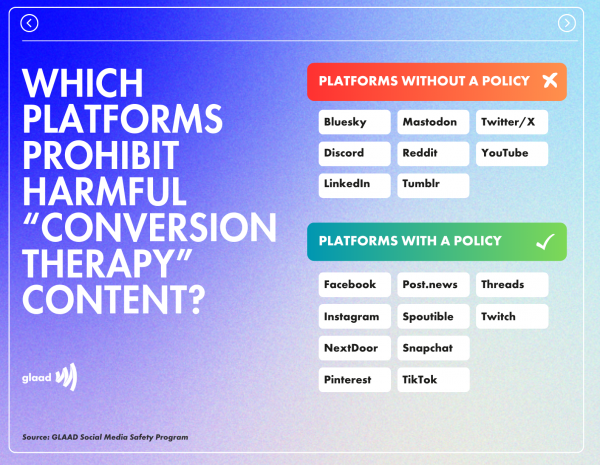

The reports show how numerous platforms and apps (including, most recently Snapchat) are increasingly adopting two LGBTQ safety protections that GLAAD’s Social Media Safety Program advocates as best practices for the industry: firstly, expressly stated policies prohibiting targeted misgendering and deadnaming of transgender and nonbinary people (i.e. intentionally using the wrong pronouns or using a former name to express contempt); and secondly, expressly stated policies prohibiting the promotion and advertising of harmful so-called “conversion therapy” (a widely condemned practice attempting to change an LGBTQ person’s sexual orientation or gender identity which has been banned or restricted in dozens of countries and US states).

Major companies that have such LGBTQ policy safeguards include: TikTok, Twitch, Pinterest, NextDoor, and now Snapchat.

Companies lagging behind and failing to provide such protections include: YouTube, BlueSky, LinkedIn, Reddit, and Mastodon. X/Twitter and Meta’s Instagram, Facebook, and Threads have received partial credit due to “self-reporting” requirements.

“Now is the time for all social media platforms and tech companies to step up and prioritize LGBTQ safety,” said GLAAD President and CEO Sarah Kate Ellis. “We urge all social media platforms to adopt, and enforce, these policies and to protect LGBTQ people — and everyone.”

Companies will have another opportunity to be acknowledged for updating their policies later this year. To be released this summer, GLAAD’s annual Social Media Safety Index report will feature an updated version of the charts.

Conversion Therapy

The widely debunked and harmful practice of so-called “conversion therapy” falsely claims to change an LGBTQ person’s sexual orientation, gender identity, or gender expression, and has been condemned by all major medical, psychiatric, and psychological organizations including the American Medical Association and American Psychological Association. Globally, there has been a growing movement to ban “conversion therapy” at the national level. As of February 2024, 14 countries have such bans, including Canada, France, Germany, Malta, Ecuador, Brazil, Taiwan, and New Zealand. In the United States, 22 states and the District of Columbia have restrictions in place.

Expressing concurrence with GLAAD’s Social Media Safety Program guidance, IFTAS (the non-profit supporting the Fediverse moderator community) stated in a February 2024 announcement: “Due to the widespread and insidious nature of expressing anti-transgender sentiments in bad faith, it’s imperative to have specific policy addressing this issue.” Further explaining the rationale behind such policies, the IFTAS announcement continues: “This approach is considered a best practice for two key reasons: it offers clear guidance to users, and it assists moderators in recognizing and understanding the intent behind such statements. It’s important to reiterate that the focus is not about accidentally getting someone’s pronouns wrong. Rather, our concern centers on deliberate and targeted acts of hate and harassment.”

Conveying appreciation to companies but also highlighting the need for policy enforcement, GLAAD’s new reporting notes that while the policies mark significant progress: “These new policy additions do not solve the extremely significant other related issue of policy enforcement (a realm in which many platforms are known to be doing a woefully inadequate job).”

There is broad consensus and building momentum toward protecting LGBTQ people, and especially LGBTQ youth, from this dangerous practice. However, “conversion therapy” disinformation, extremist scare-tactic narratives, and the profit-driven promotion of such services continue to be widespread on social media platforms, via both organic content and advertising. And, as a December 2023 Trevor Project report reveals, “conversion therapy” continues to happen in nearly every US state.

Thankfully, more tech companies and social media platforms are taking leadership to address the spread of content that promotes and advertises “conversion therapy.” In December 2023, the social platform Post added an express prohibition of such content to their policies, and in January 2024 Spoutible did the same. That same month, in response to key stakeholder guidance from GLAAD, IFTAS (the non-profit supporting the Fediverse moderator community) crafted sample policy language and implemented an “IFTAS LGBTQ+ Safety Server Pledge” system for the Fediverse, in which servers can sign-on confirming they have incorporated a policy prohibiting both the promotion of “conversion therapy” content and targeted misgendering and deadnaming. In February, Snapchat also added both prohibitions into their Hateful Content and Harmful False or Deceptive Information community guidelines policies.

GLAAD President and CEO Sarah Kate Ellis acknowledged this recent progress, saying to The Advocate: “Adopting new policies prohibiting so-called ‘conversion therapy’ content puts these companies ahead of so many others. GLAAD urges all social media platforms to adopt, and enforce, this policy and protect their LGBTQ users.”

A January 2024 report from the Global Project Against Hate & Extremism (GPAHE) illuminates how many social media companies and search engines are failing to mitigate harmful content and ads promoting “conversion therapy.” The report outlines the enormous amount of work that needs to be done, and offers many examples of simple solutions that platforms can and should urgently implement. Recommendations from the report are listed below.

In February 2022, GLAAD worked with TikTok to have the platform add an explicit prohibition of content promoting “conversion therapy.” TikTok updated its community guidelines to include the following: “Adding clarity on the types of hateful ideologies prohibited on our platform. This includes … content that supports or promotes conversion therapy programs. Though these ideologies have long been prohibited on TikTok, we’ve heard from creators and civil society organizations that it’s important to be explicit in our Community Guidelines.”

In 2022, GLAAD also urged both YouTube and Twitter (now X) to add an express prohibition of “conversion therapy” into their content and ad guidelines. While X does not currently have such a policy, YouTube, with the assistance of its AI systems, does mitigate “conversion therapy” content by showing an information panel from the Trevor Project that reads: “Conversion therapy, sometimes referred to as ‘reparative therapy,’ is any of several dangerous and discredited practices aimed at changing an individual’s sexual orientation or gender identity.” However, unlike TikTok and Meta, YouTube does not include an explicit prohibition in its Hate Speech Policy.

Meta’s Facebook and Instagram (and by extension Threads which is guided by Instagram’s policies) currently do have such a prohibition (against: “Content explicitly providing or offering to provide products or services that aim to change people’s sexual orientation or gender identity.”). However it is listed separately from the company’s standard three tiers of content moderation consideration as requiring, “additional information and/or context to enforce.” GLAAD has recommended that it be elevated to a higher priority tier. In addition to this content policy, Meta’s Unrealistic Outcomes ad standards policy also prohibits: “Conversion therapy products or services. This includes but is not limited to: Products aimed at offering or facilitating conversion therapy such as books, apps or audiobooks; Services aimed at offering or facilitating conversion therapy such as talk therapy, conversion ministries or clinical therapy; Testimonials of conversion therapy, specifically when posted or boosted by organizations that arrange and provide such services.”

Among other platforms, it is notable that the community guidelines of both Pinterest and NextDoor include a prohibition against content promoting or supporting “conversion therapy and related programs.” Twitch’s community guidelines, meanwhile, expressly state that: “regardless of your intent, you may not: Encourage the use of or generally endorsing sexual orientation conversion therapy.” As mentioned above, Post and Spoutible also have amended their policies, with Spoutible’s new guidelines being the most extensive:

Prohibited Content: Any content that promotes, endorses, or provides resources for ‘conversion therapy.’ Content that claims sexual orientation or gender identity can be changed or ‘cured.’ Advertising or soliciting services for ‘conversion therapy.’ Testimonials supporting or promoting the effectiveness of ‘conversion therapy.’

Spoutible’s policy also thoughtfully outlines these exceptions:

Content that discusses ‘conversion therapy’ in a historical or educational context may be allowed, provided it does not advocate for or glorify the practice. Personal stories shared by survivors of ‘conversion therapy,’ which do not promote the practice, may be permissible.

In addition to GLAAD’s advocacy efforts advising platforms to add prohibitions against content promoting “conversion therapy” to their community guidelines, we also urge these companies to effectively enforce these policies.

To clarify even further, all platforms should add express public-facing language prohibiting the promotion of “conversion therapy” to both their community guidelines and advertising services policies. While some platforms have described off-the-record that “conversion therapy” material is prohibited under the umbrella of other policies — policies prohibiting hateful ideologies, for instance — the prohibition of “conversion therapy” promotion should be explicitly stated publicly in their community guidelines and other policies.

When such content is reported, it’s also important for moderators to make judgments about the content in context, and distinguish between harmful content promoting “conversion therapy” versus content that mentions or discusses “conversion therapy” (i.e. counter-speech). As a 2020 Reuters story details, social media platforms can provide a space for “conversion therapy” survivors to share their experiences and find community.

GLAAD also urges all platforms to review and follow the below recommendations from the Global Project Against Hate & Extremism (GPAHE):

To protect their users, tech companies must:

- Use common sense when evaluating whether content violates rules on conversion therapy and remember that it is dangerous, and sometimes deadly, to allow pro-conversion therapy material to surface. It is quintessential medical disinformation.

- Invest in non-English, non-American cultural and language resources. The disparity in the findings for non-English users is stark.

- Elevate authoritative resources in the language being used for the terms found in the appendix.

- Incorporate “same-sex attraction” and “unwanted same-sex attraction” into their algorithm that moderates conversion therapy content and elevate authoritative content.

- Create or expand the use of authoritative information boxes about conversion therapy, preferably in the language being used.

- All online systems must keep up with the constant rebranding and use of new terms, in all languages, that the conversion therapy industry uses.

- Refrain from defaulting to English content in non-English speaking countries where possible, and if this is the only content available it must be authoritative and translatable.

- All companies must avail themselves of civil society and subject matter experts to keep their systems current.

- Additional recommendations from previous GPAHE research.

Source: Conversion Therapy Online: The Ecosystem in 2023 (Global Project Against Hate & Extremism, Jan 2024)

An earlier version of this overview first appeared in Tech Policy Press and was adapted from the 2023 GLAAD Social Media Safety Index report. The next report is forthcoming in the summer of 2024.

The preceding article was previously published by GLAAD and is republished by permission.
